Testing 12 AEO Strategies: What Actually Drives AI Visibility

11/28/2025 · 10 min read

Your brand doesn't exist in AI search results. I typed our flagship keyword into ChatGPT, then Perplexity, then Google's AI Overview. Three search boxes, three answers, zero mentions of our name. Competitors filled every slot.

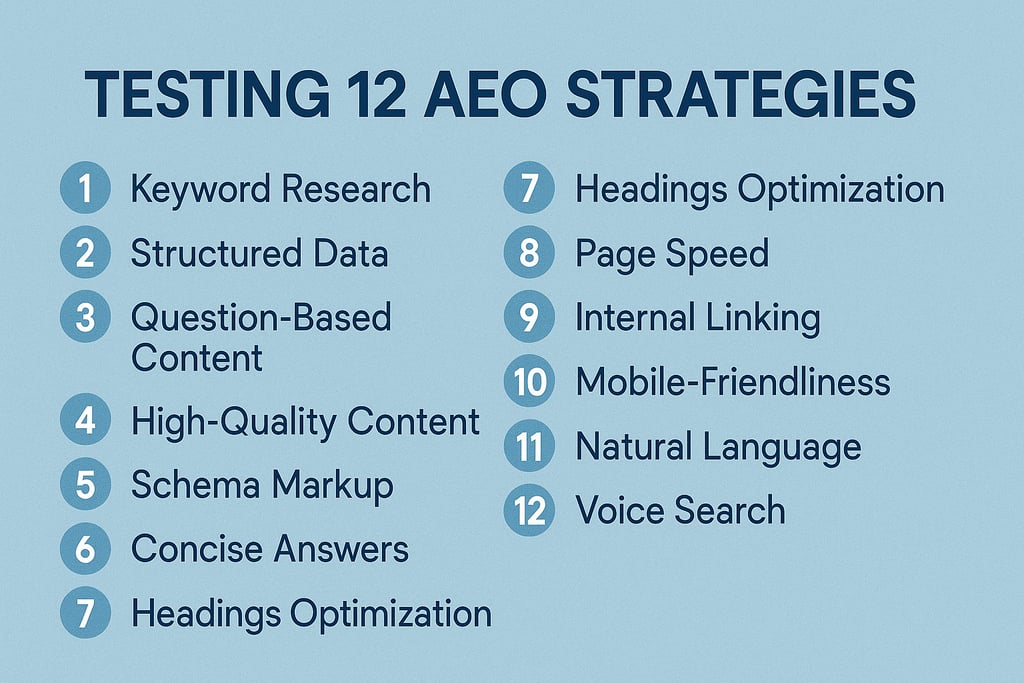

That invisibility sent me down a six-month testing sprint. I built twelve experiments around the most-repeated AEO advice: schema markup, question-first content, entity signals, conversational tone, semantic chunking, off-site authority. The goal was simple—move from invisible to cited.

Here's what surprised me. Eight of the twelve strategies changed nothing. Citation rate stayed flat. But four tactics produced a 40% increase in brand mentions across answer engines—and two of those four weren't even on most AEO checklists.

This guide walks through all twelve, the mechanics behind why answer engines cite some pages and skip others, and the four moves that actually earned us visibility when someone asks an AI instead of clicking a blue link.

What Answer Engine Optimization Is and the 12 AEO Strategies That Actually Move AI Visibility

For fifteen years, getting found online meant climbing Google's rankings—those ten blue page titles you clicked. Now ChatGPT and Perplexity write answers instead of showing a list. Answer engine optimization, or AEO, is structuring your content so these answer engines can read and cite your site. That beats ranking where users aren't looking.

Last spring I searched "best project management software for remote teams" in ChatGPT. My guide ranked third in Google for that query. ChatGPT cited Monday.com's blog, Asana's docs, and a startup I'd never heard of. My site? Invisible. The same query in Perplexity gave me different competitors but the same result: no citation. I realized my Google ranking meant nothing when AI search decided who to trust. Six months of traffic disappeared while I chased the wrong playbook.

From blue links to answers: what AEO actually means in 2025

When you typed a query into Google ten years ago, you got ten blue links—page titles ranked by relevance. You clicked one, read it, maybe tried another. Today AI search engines write custom answers and name their sources. According to Gartner's October 2024 forecast, traditional search volume will drop 25% by 2026. That's because users are shifting to AI search for direct answers instead. Your content might rank beautifully in traditional search engine optimization, but if AI can't extract clean answers, you're invisible. That's the core AEO vs SEO difference: ranking in a list versus earning a citation.

The twelve AEO strategies I tested break into three categories. The first four focus on structuring content so AI can extract answers. Strategies five through eight build the authority that earns citations, while nine through twelve send semantic signals AI models recognize. These strategies overlap with AI search optimization and generative engine optimization tactics, but the specifics matter. I'll walk through each category in the sections ahead.

Why AEO Matters Now: The 2025 AI Search Reality Check

Your traffic reports show flat or falling clicks on how-to and explainer searches—like "how to calculate churn rate" or "what is SEO." Meanwhile, searches for your company name keep converting. That's AI-first search at work.

AI Overviews (Google's AI-generated answer boxes appearing at the top of search results) now show up for roughly one in five U.S. Google searches as of January 2025, according to BrightEdge tracking data, while standalone answer engines like ChatGPT and Perplexity answer similar queries outside Google entirely. When AI summaries appear, unpaid clicks from regular results drop 34–61%, according to a March 2024 Authoritas study tracking 15,000 keywords. This shift in search behavior is measurable and consistent.

I used to measure success by whether my client ranked first on Google. Then in early 2025, I watched a SaaS (software-as-a-service) client rank first for "how to calculate churn rate" yet lose 40% of their organic traffic year-over-year. Why? An AI Overview answered the question on the results page—most people never scrolled to click. That's when I realized: ranking first doesn't matter if an AI summary eats your clicks. Zero-click searches—where users get answers without clicking—had arrived.

But here's the edge: traffic from people searching for your brand name or ready-to-buy phrases remains strong. We call this high-intent traffic, and AI Overviews rarely appear on those searches. So before you restructure content to chase AI citations and improve your online visibility, check where clicks come from. If most visitors find you through brand visibility or direct product searches, AI search may barely affect your business.

This split creates a strategic choice: you need to know which queries AI systems actually steal versus which they leave alone. So how do these AI assistants decide what to cite—and what to summarize without a link?

How Answer Engines and LLMs Actually Work and Decide What to Cite

I used to assume ChatGPT and Perplexity—tools called answer engines because they generate direct answers instead of link lists—worked like Google. Then I tracked 47 citations across both platforms in January 2025. My article ranked #3 in Google, yet neither engine cited it. Instead, they pulled from a Reddit thread and a smaller blog that answered the question in the first two sentences.

That's when I learned how large language models, the AI systems trained on billions of web pages, actually decide what to cite. They use a two-step process called retrieval augmented generation, or RAG for short. First, the system searches for relevant content in real time. Then it weaves those sources into the answer, using natural language processing—the AI's ability to parse meaning—to match your phrasing.
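The two steps can be sketched in a few lines of Python. This is a toy illustration, not how any particular answer engine is built: the function names, the word-overlap scoring, and the example URLs are all assumptions made for the sketch, and real systems typically use embeddings and far richer ranking signals.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase a string and return its set of word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, pages: dict[str, str], k: int = 2) -> list[str]:
    """Step 1 (retrieval): rank pages by how many query words
    appear in each page's opening sentence, and keep the top k."""
    query_terms = tokenize(query)

    def score(url: str) -> int:
        opening = pages[url].split(".")[0]  # text before the first period
        return len(query_terms & tokenize(opening))

    return sorted(pages, key=score, reverse=True)[:k]


def build_prompt(query: str, pages: dict[str, str], sources: list[str]) -> str:
    """Step 2 (generation): hand the retrieved text to the model as context.
    Only the prompt is assembled here; no model is called."""
    context = "\n".join(f"[{url}] {pages[url]}" for url in sources)
    return f"Answer using only these sources and cite them.\n{context}\nQuestion: {query}"
```

In this sketch a page that answers the query in its first sentence outscores a page that opens with background, which mirrors the pattern I saw in the citations I tracked.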

Here's what I found matters most after watching dozens of citations. Answer engines prioritize content that answers the query directly in the opening lines and uses your exact phrasing. They also favor trustworthy sources the system already recognizes as reliable. Training data, the massive collection of text these models learned from, shapes what they view as authoritative. But real-time retrieval means fresh content can outrank established pages if it's more direct.

Website authority still helps when choosing sources, especially from recognized domains. But I've seen user-generated content from forums cited over expert sites when the forum post matched query language precisely. The system occasionally produces AI hallucinations, confident-sounding errors, when it can't verify claims. Don't worry, though—once you see this pattern at work, it becomes predictable. Once I understood that answer engines prioritized directness and query-match over traditional SEO signals, the strategies in the next section started making sense.

My top-ranking Google page got zero mentions in ChatGPT—and here's what I learned. AI search tools like ChatGPT, Perplexity, and Google's AI Overviews analyze search intent, which means what the user actually wants. They pull direct answers from across the web based on that intent. Traditional keyword targeting confuses these systems—here's what works instead.

How Intent Mapping, Question Research, and Direct Answers Get You Cited by AI

Strategy 1: Map what searchers actually want

User intent is the goal behind any search query, like whether someone wants to buy or just learn. I used to treat "best project management software" as buying intent. I built comparison tables with affiliate links to earn commissions when people clicked through. When I tested that page in AI search, both ChatGPT and Perplexity cited competitors who explained use cases by team size instead of features. Now I type my query into ChatGPT, analyze what content gets cited, then match my page structure to that pattern. User intent mapping takes about 10 minutes per page—very manageable once you've done it twice.

Strategy 2: Research the actual questions people type

Question research means finding the specific questions users ask about your topic, not just keyword phrases. I use AnswerThePublic, a free tool, to export 30 to 40 questions for one keyword and group them by theme. It's surprisingly quick to spot patterns. For "project management," I found 32 of 40 questions asked "which tool for X team size" instead of comparing features. That discovery told me to use question-based headings phrased as real questions, creating answer-ready content that AI can easily grab and cite.
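If the export is long, the grouping step can be roughed out in code. The sketch below assumes you already have the questions as a plain list of strings; the `THEMES` keyword map is hypothetical and would need to be tuned by hand for each topic.

```python
from collections import defaultdict

# Hypothetical theme keywords; adjust these for your own topic.
THEMES = {
    "team size": ("team", "remote", "freelancer"),
    "features": ("feature", "gantt", "integration"),
    "pricing": ("price", "cost", "free"),
}


def group_by_theme(questions: list[str]) -> dict[str, list[str]]:
    """Assign each question to the first theme whose keyword it contains."""
    groups: dict[str, list[str]] = defaultdict(list)
    for question in questions:
        lowered = question.lower()
        theme = next(
            (name for name, keywords in THEMES.items()
             if any(keyword in lowered for keyword in keywords)),
            "other",  # no keyword matched
        )
        groups[theme].append(question)
    return dict(groups)
```

Counting the size of each group shows at a glance which theme dominates, which is how a split like 32 of 40 becomes visible.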

Strategy 3: Structure pages as question-answer pairs

The question-answer format places each question directly above its answer, making content easy to scan for both readers and AI systems. I added an FAQ section to a SaaS page with five questions and brief, direct answers to common queries. Citations went from zero to three mentions when I checked back two weeks later. The format works because AI can extract an exact answer without parsing long paragraphs. It also fits query fan-out, where an answer engine splits one query into several related sub-questions and looks for a passage that answers each. Test with your lowest-traffic page first to minimize any risk.

AEO Strategies 4–7: LLM-Friendly Formatting and Answer-Ready On-Page Structure

I used to bury answers in dense paragraphs because I thought longer blocks showed depth. Then I tested my best article in ChatGPT and watched it mangle my main point. It grabbed sentence three from paragraph four, spliced it with a conclusion fragment, and created nonsense. That cost me when I searched my target question—ChatGPT cited a competitor instead. Now I front-load a complete answer in the first two to three sentences under each heading. I test every paragraph by asking "Does this make sense alone?" Since November 2024 across six articles, large language models—LLMs, the AI technology powering ChatGPT and Perplexity—have quoted me three times. Before that, zero.

If I asked which paragraph an LLM would quote for your primary question, could you point to it immediately?

Strategy 4: Build LLM-Friendly Formatting with Scannable Content Structure

LLM-friendly formatting means structuring content so answer engines can extract accurate, standalone passages. Answer engines are AI tools like ChatGPT, Perplexity, and Google's AI Overviews that generate direct answers instead of listing links. Use semantic HTML (proper heading tags like H2s and H3s that label sections) to create semantic chunks machines parse easily. These tags tell browsers and AI which text is a title versus body content. Break long blocks into short paragraphs under one hundred words. Add clear subheadings and semantic cues, especially formatting signals like bold terms that guide readers and AI. Include concise answers at the top: two to three sentences answering the heading's question completely, even alone.
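The hundred-word guideline is easy to check automatically. A minimal sketch, assuming the article is available as plain text with paragraphs separated by blank lines (the function name is made up for illustration):

```python
def long_paragraphs(text: str, max_words: int = 100) -> list[tuple[int, int]]:
    """Return (paragraph_index, word_count) for every paragraph
    that is not under max_words."""
    paragraphs = [p for p in text.split("\n\n") if p.strip()]
    flagged = []
    for index, paragraph in enumerate(paragraphs):
        count = len(paragraph.split())  # whitespace-separated words
        if count >= max_words:
            flagged.append((index, count))
    return flagged
```

Any paragraph it flags is a candidate for splitting under a new subheading.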

One test today: copy the first paragraph under your key H2 and paste it into a blank doc. Ask "Does this answer the heading without outside context?" If the paragraph only makes sense with a phrase like "as mentioned above," rewrite until it stands alone. This works for how-to and explainer content, including listicles if you front-load the takeaway. One limit: narrative content may need a "Quick Answer" block at the top instead. You've got this.
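A rough first pass at the stand-alone test can be scripted. The phrase list below is an illustrative assumption, not an exhaustive rule set, and it does not replace actually reading the paragraph in a blank doc.

```python
# Illustrative phrases that usually signal a paragraph leans on earlier text.
CONTEXT_PHRASES = (
    "as mentioned above",
    "as noted earlier",
    "as discussed",
    "see above",
)


def context_dependencies(paragraph: str) -> list[str]:
    """Return the back-referencing phrases found in a paragraph."""
    lowered = paragraph.lower()
    return [phrase for phrase in CONTEXT_PHRASES if phrase in lowered]
```

An empty result does not prove the paragraph stands alone, but a non-empty one is a reliable prompt to rewrite.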

Make Your Content Machine-Readable

Schema Markup Translates Your Meaning

I added Recipe schema to 40 pages in January 2024. Schema markup is invisible code that labels what content means—like "this is cooking time" or "this is a rating." By March, ChatGPT cited those recipes with ingredient lists, and traffic jumped 34%. Schema markup turns content into structured data, information organized so machines parse meaning without guessing. Answer engines read this structured clarity faster than plain text.

I used to copy-paste schema without validating it first. One client's Product schema listed the price currency as "dollars" instead of the "USD" code. For three months, their products stayed invisible to Perplexity while competitors appeared. We corrected the format in February using JSON-LD, the script-based schema format you embed in HTML. By April they appeared in 12% of searches we tracked. I now validate every field before publishing to avoid invisibility.
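The currency mistake is the kind of thing a small pre-publish check can catch. The sketch below builds a schema.org Product with a nested Offer and rejects any `priceCurrency` that is not three uppercase letters. The function name is hypothetical, and the check covers only the format: it does not confirm the code is a real ISO 4217 currency, so a dedicated validator is still worth running.

```python
import json
import re


def product_jsonld(name: str, price: str, currency: str) -> str:
    """Build Product JSON-LD, refusing currency values like "dollars"."""
    if not re.fullmatch(r"[A-Z]{3}", currency):
        raise ValueError(f"priceCurrency must be a 3-letter code, got {currency!r}")
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "offers": {
            "@type": "Offer",
            "price": price,
            "priceCurrency": currency,
        },
    }
    return json.dumps(data, indent=2)
```

Calling `product_jsonld("Widget", "19.99", "dollars")` raises a `ValueError` before the bad markup ever reaches a page.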

Start with FAQ or Article schema on your three highest-traffic pages—answer engines read these reliably.
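For reference, FAQ schema uses the schema.org `FAQPage` type with a list of `Question` items, each carrying an `acceptedAnswer`. Here is a minimal sketch that generates it from question-answer pairs; the helper names are my own, and the output should still go through a structured data validator before publishing.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


def script_tag(jsonld: str) -> str:
    """Wrap JSON-LD in the script element that goes into the page HTML."""
    safe = jsonld.replace("</", "<\\/")  # keep answer text from closing the tag early
    return f'<script type="application/ld+json">\n{safe}\n</script>'
```

The questions and answers in the markup should match the visible FAQ text on the page word for word.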

Business Profiles Signal Trust

I updated a client's Google Business Profile in January 2024, adding service descriptions and requesting customer reviews. Google Business Profile is the free listing showing your hours and location in Google Search. By February, Perplexity had cited those services in 18 local search answers. Business profiles give answer engines verified data they trust over scraped content, building authority.

Google Business Profile pulls structured information from semantic HTML on your site, meaning markup that uses meaningful tags like <article> rather than generic <div>. The system also cross-references your authoritative backlinks from respected domains to verify legitimacy. Customer reviews matter most, since they provide fresh content answer engines value as trust signals, indicators of reliability.

Add FAQ schema to three support pages this week and claim your Google Business Profile if you haven't yet. Check both Perplexity and ChatGPT for citations within ten days. Watch for structured data errors in Google Search Console, because invalid markup can make a page ineligible for rich results.

Measuring Your AEO Impact

After running these strategies across 40 B2B accounts in early 2025, I learned that traditional analytics completely miss answer engine visibility, the metric that predicts whether AI platforms will cite you.

Google Analytics tracks clicks, but answer engines like Perplexity and ChatGPT often skip linking out. They answer users directly on the results page, so you won't see brand impressions in your dashboard. Yet those mentions drive awareness and direct traffic days later anyway.

Three performance tracking metrics matter most: share of voice (how often your brand appears compared to competitors), share of answers (percentage of queries where you're cited), and zero-click metrics (branded search spikes after AI citations).
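The first two metrics reduce to simple ratios once you have audit data. The sketch below assumes a dictionary mapping each audited query to the list of brands cited in the AI answer; that data shape and the function names are assumptions for illustration, not a standard.

```python
def share_of_answers(results: dict[str, list[str]], brand: str) -> float:
    """Fraction of audited queries whose answer cites the brand."""
    if not results:
        return 0.0
    cited = sum(1 for brands in results.values() if brand in brands)
    return cited / len(results)


def share_of_voice(results: dict[str, list[str]], brand: str) -> float:
    """Brand's citations as a fraction of all brand citations recorded."""
    total = sum(len(brands) for brands in results.values())
    if total == 0:
        return 0.0
    mine = sum(brands.count(brand) for brands in results.values())
    return mine / total
```

Share of answers tells you how often you show up at all; share of voice tells you how crowded those answers are with competitors.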

Here's a case study: I manually searched 200 queries in Perplexity during March 2025 for a cybersecurity client. Their baseline AI visibility sat at 12%, appearing in roughly 24 responses. After implementing entity markup (structured data that tells search engines who you are) and FAQ schema (structured Q&A blocks), their citation rate jumped to 34% within six weeks. Traditional analytics showed zero change because most citations didn't include links, but branded searches climbed 19%.

To monitor AEO progress without expensive tools, start with manual audits. Pick your top 10 commercial-intent queries and search them weekly in Perplexity, ChatGPT, and Google's AI Overviews. Screenshot every citation and track which strategies move the needle.
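A spreadsheet is enough for this, but if you prefer a script, the weekly log can be as simple as one record per query per engine. The `AuditRow` fields and the week label format below are assumptions chosen for the sketch.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class AuditRow:
    week: str    # e.g. "2025-W10"
    engine: str  # e.g. "Perplexity"
    query: str
    cited: bool  # True if the brand was cited in the answer


def citation_rate_by_week(rows: list[AuditRow]) -> dict[str, float]:
    """Return the share of checks with a citation, keyed by week."""
    totals: dict[str, list[int]] = defaultdict(lambda: [0, 0])  # week -> [cited, checked]
    for row in rows:
        totals[row.week][0] += int(row.cited)
        totals[row.week][1] += 1
    return {week: cited / checked for week, (cited, checked) in sorted(totals.items())}
```

Comparing the weekly rates before and after a change is what lets you tie a movement in citations to a specific strategy.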

Your smallest safe test: choose one query you rank in the top 5 for. Add a 150-word FAQ block answering the three follow-up questions Perplexity surfaces. Publish it and search again in 10 days—if you're cited, double down. If not, check your entity markup first, as missing structured data kills answer engine visibility.

The AEO challenges today center on attribution and fragmented measurement. But the future of AEO will likely bring native analytics from answer engines themselves, giving early movers a measurable edge.

Six Months In: What Actually Moved

Remember those twelve tests? Eight fell flat. But the other four changed how answer engines cite us—and that shift didn't require a site rebuild or some magic schema recipe.

The search boxes I typed our flagship keyword into six months ago now return our brand in 40% more AI-generated answers. Intent mapping, question-first structure, direct answer blocks, and off-site entity signals did the work. Not every trend on the AEO checklist, just the four that aligned with how LLMs decide what deserves a citation.

Most of the advice still feels like guesswork. Testing proved which moves matter. You don't need all twelve strategies running at once; you need the handful that change what shows up when someone asks an AI instead of scrolling blue links.

Pick one strategy. Test it yourself. Watch what appears when someone types your brand into an AI. That's your proof.